Angelica Martinez, a writer at BuzzFeed, recently explored how artificial intelligence handles image generation. Using Midjourney, an AI tool capable of generating lifelike images, she set out to depict a typical person in a variety of professions. The list of vocations was diverse, including astronauts, doctors, influencers, baristas, and many more.

The resulting series of AI-generated professional portraits is visually stunning. Each image appears glossy and idealized, with every professional looking as if they’d just stepped off a Hollywood film set or out of a glamorous photo shoot. Beneath the initial glamour, however, the series opens up interesting conversations about AI, how it interprets prompts, and the potential biases in the data it was trained on.

ACCOUNTANTS

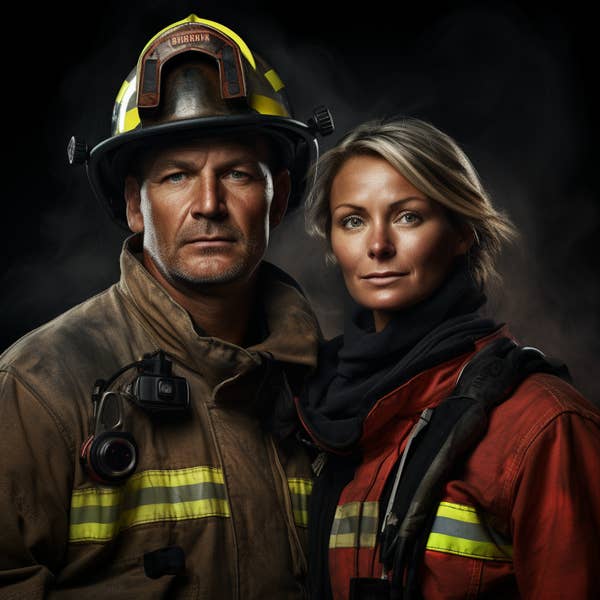

First, it was striking how uniformly polished and well-presented the AI’s idea of an average professional was. Whether doctors in white coats or astronauts ready for a space mission, every person was remarkably fit, perfectly groomed, and without a single hair out of place. Firefighters wore their gear with effortless confidence, dentists flashed bright, infectious smiles, and news anchors cast smoldering glances at the camera as if born for the limelight.

On closer examination, however, the AI’s visual narratives began to unravel. Some odd choices in the generated images raised questions about the material used to train the AI and about the stereotypical assumptions that may have shaped its output.

ACTORS

ASTRONAUTS

Take, for instance, the image of the astronauts. They exuded an intense, almost severe demeanor, and the female astronaut in particular looked as if she had been caught in a moment of surprise or alarm. It was an unexpected twist on a profession one would expect to see portrayed as focused, composed, and calm. This portrayal raised questions about what data informed the AI’s concept of an astronaut and whether that data was sufficiently diverse and representative.

Similarly, the actors generated by the AI looked as if they had stepped straight out of a 1930s period drama. Dressed in attire reminiscent of a long-past era, they contrasted starkly with the modern, everyday reality of working actors. This suggests that the AI had a skewed understanding of the profession, likely rooted in the specific data it was trained on.

AUTHORS

Another interesting anomaly was the image of the authors. Their portrayal was a clichéd stereotype bordering on caricature. Dressed in typical hipster fashion, complete with glasses that hinted at literary greatness, the authors looked as if they had been plucked from a pop-culture notion of what writers look like rather than from reality.

The AI’s take on morticians was equally fascinating. The generated morticians appeared to have time-traveled from the late 19th century and bore little resemblance to their contemporary counterparts. This raises the issue of historical accuracy in AI image generation and the potential pitfalls of over-relying on certain types of training data.

BARISTAS

One of the most striking patterns across the series, however, was an apparent age bias. The images were overwhelmingly youthful, with only a handful of exceptions. Older professionals did appear in science-related professions and teaching, but they were notably in the minority. The sole middle-aged woman in the series was a teacher, which raises questions about the age and gender biases that may have crept into the AI’s training data.

LANDSCAPERS

This underrepresentation of older people suggests that the AI’s training data may lean toward younger individuals. That raises concerns about the inclusivity and fairness of AI, and about the need to ensure that training data reflects a diverse, equitable, and accurate picture of society.

CHEFS

The project, while visually appealing, ultimately serves as a reminder of AI’s limitations. It underscores that AI tends to reinforce stereotypes and is only as good as the data it has been trained on. As AI becomes increasingly integrated into our daily lives, it is crucial to approach AI-generated visuals with a critical eye and to keep up an ongoing conversation about the ethics and implications of AI use.

DELIVERY DRIVERS

It invites us to see these images not just as a testament to AI’s impressive capabilities, but also as a mirror reflecting the biases that can creep into AI systems unnoticed. It challenges us to do better at ensuring diversity and inclusivity in our AI models and to keep striving to improve the accuracy and fairness of AI outputs.

To fully appreciate the project’s scope, explore all 36 images and form your own opinions. Look beyond the polished veneer of each image and consider how accurately or inaccurately the AI has captured the essence of each job. It’s a fascinating exercise, and one that invites everyone to join a broader dialogue about the role of AI in society and its potential to shape how we perceive the world around us.

DENTISTS

DOCTORS

ENGINEERS

FASHION DESIGNERS

FAST FOOD EMPLOYEES

FIREFIGHTERS

FLIGHT ATTENDANTS

INFLUENCERS

JOURNALISTS

LAWYERS

MAKEUP ARTISTS

MARKETING MANAGERS

MORTICIANS

NEWS ANCHORS

NURSES

PEDIATRICIANS

PERSONAL TRAINERS

PILOTS

PUBLICISTS

REAL ESTATE AGENTS

RETAIL EMPLOYEES

SCIENTISTS

SOFTWARE DEVELOPERS

TATTOO ARTISTS

TEACHERS

THERAPISTS

VETERINARIANS

WAITERS